NodeJS, Express, and AWS DynamoDB: if you’re mixing these three in your stack, then I’ve written this post with you in mind. It’s also sprinkled with some Promise explanations, because too many posts explain Promises without a tangible use case. One caveat: I don’t write in ES6 form. That’s just my habit, but I think the code is readable anyway. The examples below all ‘get’ data (no PUT or POST), but I believe they’re still helpful for setting up a project. All code can be found in my GitHub repo.

Level Set

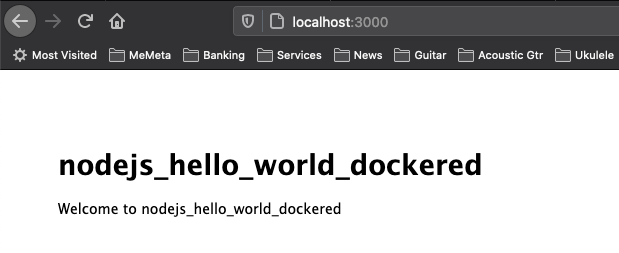

Each example in this post is a different resource in an Express + EJS app, but they all look similar to the following (route and EJS code, respectively):

```javascript
// routes/index.js
var express = require('express');
var router = express.Router();

router.get('/', function(req, res, next) {
  res.render('index', { description: 'Index', jay_son: {x:3} } );
});

module.exports = router;
```

(You can view the full routes/index.js file in my repo.)

```html
<!-- views/index.ejs -->
<body>
  <h1><%= description %></h1>
  <code><%= JSON.stringify(jay_son,null,2) %></code>
</body>
```

The rendered page:

```
Index
{ "x":3 }
```

(If any of this looks foreign or confusing, you’ll probably need to back up and study NodeJS, Express, and EJS first.)

Basic Promise

First up, a very simple Promise that doesn’t really do anything, but it shows the basic usage pattern:

```javascript
// A static value wrapped in a Promise
async function simplePromise() {
  return Promise.resolve({resolved:"Promise"});
}

router.get('/simple_promise', function(req, res, next) {
  simplePromise().then( function(obj) {
    res.render('index',
      { description: 'Simple Promise', jay_son: obj });
  }).catch(next); // hand any error to Express's error handler
});
```

http://localhost:3000/simple_promise

```
Simple Promise
{ "resolved":"Promise" }
```

The simplePromise function returns a Promise whose value has already been computed (it’s static data). The route reads that value in the then() callback and passes obj to the view for rendering.
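Since simplePromise is declared async, the explicit Promise.resolve() inside it is optional: an async function wraps a plain return value in a Promise by itself. The sketch below (the function names `simpleAsync` and `simpleExplicit` are hypothetical) shows that both styles resolve to the same object, and that a then() callback runs only after the current synchronous code has finished, even when the value is already available.

```javascript
// Hypothetical helpers for illustration only.

// An async function wraps whatever it returns in a Promise.
async function simpleAsync() {
  return { resolved: "Promise" };
}

// A plain function can build the already-resolved Promise explicitly.
function simpleExplicit() {
  return Promise.resolve({ resolved: "Promise" });
}

const events = [];

simpleAsync().then(function (obj) {
  // Runs later, as a microtask, even though the value already exists.
  events.push("then callback: " + obj.resolved);
});

// This line runs before the then() callback above.
events.push("synchronous code after the call");

// Both styles resolve to equivalent objects.
Promise.all([simpleAsync(), simpleExplicit()]).then(function (results) {
  console.log(results); // [ { resolved: 'Promise' }, { resolved: 'Promise' } ]
  console.log(events);  // the synchronous entry is listed first
});
```

In a route, either style works the same way with then(); the async keyword just saves the explicit wrapping.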

Get Single DynamoDB Item

For the rest of this post I’m using two DynamoDB tables. This is not a data model I would take to a client; it’s inefficient. The data is purely illustrative, for cases where JSON document storage suits your tech stack:

```
d_bike_manufacturers  // partition key 'name'
{ "name":"Fuji", "best_bike":"Roubaix3.0" }
{ "name":"Specialized", "best_bike":"StumpjumperM2" }

d_bike_models  // partition key 'model_name'
{ "model_name":"Roubaix3.0", "years":[ 2010, 2012 ] }
{ "model_name":"StumpjumperM2", "years":[ 1993, 1994, 1995, 1996, 1997, 1998, 2000 ] }
```

Back to the NodeJS code:

```javascript
const AWS = require("aws-sdk");
AWS.config.update({ region: "us-east-1" });
const dynamoDB = new AWS.DynamoDB.DocumentClient();

// '/manufacturer'
router.get('/manufacturer', function(req, res, next) {
  var params = { Key: { "name": req.query.name_str },
                 TableName: "d_bike_manufacturers" };
  dynamoDB.get(params).promise().then( function(obj) {
    res.render('index',
      { description: 'Single DynamoDB Item', jay_son: obj }
    );
  }).catch(next); // pass DynamoDB errors to Express
});
```

http://localhost:3000/manufacturer?name_str=Fuji

```
Single DynamoDB Item
{
  "Item":{
    "name":"Fuji",
    "best_bike":"Roubaix3.0"
  }
}
```

Note especially that the returned data is wrapped in an object under the key ‘Item’. If no item matches the key, the response has no ‘Item’ key at all; it’s just an empty object.
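To exercise route logic without an AWS account, one option is a small in-memory stand-in that mimics the response shape described above: `{ Item: {...} }` on a hit and an empty object on a miss. `makeFakeDocumentClient` and `unwrapItem` are hypothetical helpers, not part of the AWS SDK; only the `get(params).promise()` call shape mirrors the real DocumentClient.

```javascript
// Hypothetical in-memory stand-in for AWS.DynamoDB.DocumentClient.get().
// Supports only single-attribute partition keys, like the tables in this post.
function makeFakeDocumentClient(tables) {
  return {
    get: function (params) {
      return {
        promise: function () {
          const rows = tables[params.TableName] || [];
          const keyName = Object.keys(params.Key)[0];
          const match = rows.find(function (row) {
            return row[keyName] === params.Key[keyName];
          });
          // Mirror DynamoDB: wrap a hit under "Item", return {} on a miss.
          return Promise.resolve(match ? { Item: match } : {});
        }
      };
    }
  };
}

// Hypothetical helper: pull the item out of its wrapper, or null if missing.
function unwrapItem(result) {
  return result && result.Item ? result.Item : null;
}

const fakeDB = makeFakeDocumentClient({
  d_bike_manufacturers: [
    { name: "Fuji", best_bike: "Roubaix3.0" },
    { name: "Specialized", best_bike: "StumpjumperM2" }
  ]
});

fakeDB.get({ Key: { name: "Fuji" }, TableName: "d_bike_manufacturers" })
  .promise()
  .then(function (obj) {
    console.log(obj);             // { Item: { name: 'Fuji', best_bike: 'Roubaix3.0' } }
    console.log(unwrapItem(obj)); // { name: 'Fuji', best_bike: 'Roubaix3.0' }
  });
```

Because the stub keeps the `.promise()` call, route code written against it would not need to change when pointed at the real client.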

Consecutive DynamoDB GETs

If the item you ultimately need can’t be queried directly (for whatever reason), you can make consecutive DynamoDB calls. Notice how the Promises are nested. From a performance perspective this isn’t desirable, and it can add a lot of latency if the pattern is repeated further. (Some basic testing I did showed P95 times of around 100ms, server side.) The point of this demonstration is that the first Promise must resolve before the second can be constructed. (Best to avoid such consecutive queries, but we’re illustrating here.)

```javascript
// '/manufacturers_best_bike'
router.get('/manufacturers_best_bike', function(req, res, next) {
  var params = { Key: { "name": req.query.name_str },
                 TableName: "d_bike_manufacturers" };
  dynamoDB.get(params).promise().then( function(obj) {
    // An empty response object means no manufacturer matched
    if (Object.keys(obj).length === 0) {
      res.render('index',
        { description: 'Consecutive DynamoDB Items',
          jay_son: {err: "not found"} });
      return;
    }
    var bikeName = obj.Item.best_bike;
    var params2 = { Key: { "model_name": bikeName },
                    TableName: "d_bike_models" };
    // Returning the inner Promise lets the outer catch() see its errors
    return dynamoDB.get(params2).promise().then( function(obj2) {
      res.render('index',
        { description: 'Consecutive DynamoDB Items',
          jay_son: obj2 }
      );
    });
  }).catch(next);
});
```
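The nesting in the route above isn’t what enforces the ordering; waiting on the first lookup is. Below is a minimal sketch of the same two consecutive lookups written with async/await so the code stays flat. To keep it runnable offline, it assumes a hypothetical in-memory `fakeGet` in place of `dynamoDB.get(params).promise()` and a hypothetical `bestBikeFor` function instead of an Express route. It still makes two sequential round trips, so the latency concern above applies unchanged.

```javascript
// Hypothetical in-memory data standing in for the two DynamoDB tables.
const tables = {
  d_bike_manufacturers: [{ name: "Fuji", best_bike: "Roubaix3.0" }],
  d_bike_models: [{ model_name: "Roubaix3.0", years: [2010, 2012] }]
};

// Stand-in for dynamoDB.get(params).promise(): resolves to { Item } or {}.
function fakeGet(params) {
  const keyName = Object.keys(params.Key)[0];
  const match = (tables[params.TableName] || []).find(function (row) {
    return row[keyName] === params.Key[keyName];
  });
  return Promise.resolve(match ? { Item: match } : {});
}

// Consecutive lookups with async/await: still sequential, but flat.
async function bestBikeFor(manufacturerName) {
  const manufacturer = await fakeGet({
    Key: { name: manufacturerName },
    TableName: "d_bike_manufacturers"
  });
  if (!manufacturer.Item) {
    return { err: "not found" };
  }
  // The second key only exists once the first lookup has resolved.
  return fakeGet({
    Key: { model_name: manufacturer.Item.best_bike },
    TableName: "d_bike_models"
  });
}

bestBikeFor("Fuji").then(function (obj) {
  console.log(JSON.stringify(obj));
  // {"Item":{"model_name":"Roubaix3.0","years":[2010,2012]}}
});
```

Wired into a route, fakeGet would be replaced by the real DocumentClient call, and the handler would call bestBikeFor(req.query.name_str), render the result inside then(), and pass failures along with .catch(next).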

http://localhost:3000/manufacturers_best_bike?name_str=Fuji

```
Consecutive DynamoDB Items
{
  "Item":{
    "model_name":"Roubaix3.0",
    "years":[ 2010, 2012 ]
  }
}
```

Promise.all()

Promises aren’t strictly run in parallel; rather, creating a Promise is what starts its work, so both DynamoDB requests below are in flight at the same time. The all() method simply waits for every Promise to resolve, and it can make your code look a lot cleaner. In the resource below, we query DynamoDB twice using information from the supplied query parameters.

```javascript
// '/manufacturer_and_bike'
router.get('/manufacturer_and_bike', function(req, res, next) {
  // Two query params
  var params = { Key: { "name": req.query.name_str },
                 TableName: "d_bike_manufacturers" };
  var params2 = { Key: { "model_name": req.query.bike_str },
                  TableName: "d_bike_models" };
  // Both requests are sent as soon as these Promises are created
  var promises_arr = [
    dynamoDB.get(params).promise(),
    dynamoDB.get(params2).promise()
  ];
  Promise.all(promises_arr).then( function(obj) {
    res.render('index',
      { description: 'Promise.all() for Concurrency',
        jay_son: obj } );
  }).catch(next); // rejects if either request fails
});
```

http://localhost:3000/manufacturer_and_bike?name_str=Fuji&bike_str=Roubaix3.0

```
Promise.all() for Concurrency
[
  {
    "Item":{
      "name":"Fuji",
      "best_bike":"Roubaix3.0"
    }
  },
  {
    "Item":{
      "model_name":"Roubaix3.0",
      "years":[ 2010, 2012 ]
    }
  }
]
```

Note how the items are returned in an array, in the same order as the array of Promises. One gotcha with Promise.all() is that it’s ‘all’ or nothing: if any one of the Promises rejects, the whole thing rejects, so you’ll need to ‘catch’ it.
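Two points from this section can be checked without AWS: every Promise starts its work as soon as it’s created, and a single rejection sends Promise.all() straight to catch(). The sketch uses a hypothetical `fakeLookup` in place of `dynamoDB.get(params).promise()` that fails for a made-up table name. It also shows Promise.allSettled() (Node 12.9+), which isn’t used in this post but is an option when you would rather render partial results than fail the whole request.

```javascript
const log = [];

// Hypothetical stand-in for dynamoDB.get(params).promise().
// The executor body runs immediately when the Promise is created.
function fakeLookup(table, key) {
  log.push("started " + key);
  return new Promise(function (resolve, reject) {
    setTimeout(function () {
      if (table === "missing_table") {
        reject(new Error("table not found (simulated)"));
      } else {
        resolve({ Item: { key: key } });
      }
    }, 10);
  });
}

const lookups = [
  fakeLookup("d_bike_manufacturers", "Fuji"),
  fakeLookup("missing_table", "Roubaix3.0")
];
// Both lookups have already started before anything waits on them.
console.log(log); // [ 'started Fuji', 'started Roubaix3.0' ]

// All or nothing: one rejection sends Promise.all() to catch().
Promise.all(lookups)
  .then(function (objs) {
    console.log("rendered", objs);
  })
  .catch(function (err) {
    console.log("Promise.all failed:", err.message);
  });

// allSettled reports each outcome instead of failing fast.
Promise.allSettled(lookups).then(function (outcomes) {
  console.log(outcomes.map(function (o) { return o.status; }));
  // [ 'fulfilled', 'rejected' ]
});
```

In the Express route, the catch() branch is where you would call next(err) or render an error message.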

DynamoDB BatchGet

Using the AWS SDK to push more of the data operations to the cloud side is generally a good idea. Instead of iterating over many keys, include all of them in a single request. Note the form of the params object, which lets us query multiple tables at once.
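The params-building step in the route below can also be pulled out into a reusable function. This sketch assumes a hypothetical `buildBatchGetParams` helper that takes a map of table name to key attribute and values. It removes duplicate keys (DynamoDB rejects a BatchGet request whose key list contains duplicates) and splits the work into requests of at most 100 keys, which is BatchGetItem’s per-request item limit.

```javascript
// Hypothetical helper: build BatchGet params for several tables at once,
// dropping duplicate keys and splitting into requests of at most maxKeys keys.
function buildBatchGetParams(keysByTable, maxKeys) {
  maxKeys = maxKeys || 100; // BatchGetItem accepts up to 100 keys per request
  const requests = [];
  let current = { RequestItems: {} };
  let count = 0;

  Object.keys(keysByTable).forEach(function (tableName) {
    const spec = keysByTable[tableName]; // { keyName: "...", values: [...] }
    // Set-based de-duplication; fine for string key values like these.
    const unique = Array.from(new Set(spec.values));
    unique.forEach(function (value) {
      if (count === maxKeys) {
        // Current request is full: start a new one.
        requests.push(current);
        current = { RequestItems: {} };
        count = 0;
      }
      if (!current.RequestItems[tableName]) {
        current.RequestItems[tableName] = { Keys: [] };
      }
      const key = {};
      key[spec.keyName] = value;
      current.RequestItems[tableName].Keys.push(key);
      count++;
    });
  });

  if (count > 0) requests.push(current);
  return requests;
}

const requests = buildBatchGetParams({
  d_bike_manufacturers: { keyName: "name", values: ["Fuji", "Specialized", "Fuji"] },
  d_bike_models: { keyName: "model_name", values: ["Roubaix3.0", "StumpjumperM2"] }
});
console.log(JSON.stringify(requests[0]));
```

Each element of the returned array is a params object you could pass to dynamoDB.batchGet(params).promise(). BatchGet doesn’t return items in the order you requested them (note Specialized before Fuji in the output further down), and anything it couldn’t finish comes back under UnprocessedKeys, which you would need to resubmit.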

```javascript
// '/manufacturers' (batch get)
router.get('/manufacturers', function(req, res, next) {
  var manufTableName = "d_bike_manufacturers";
  var manufacturers_array = req.query.names_str.split(",");
  var bikeTableName = "d_bike_models";
  var bikes_array = req.query.bikes_str.split(",");

  var params = { RequestItems: {} };
  params.RequestItems[manufTableName] = {Keys: []};
  for (var manufacturer_name of manufacturers_array) { // 'var' avoids an implicit global
    params.RequestItems[manufTableName].Keys.push(
      { name: manufacturer_name } );
  }
  params.RequestItems[bikeTableName] = {Keys: []};
  for (var bike_name of bikes_array) {
    params.RequestItems[bikeTableName].Keys.push(
      { model_name: bike_name } );
  }

  console.log(JSON.stringify(params,null,2));

  dynamoDB.batchGet(params).promise().then( function(obj) {
    res.render('index',
      { description: 'DynamoDB BatchGet', jay_son: obj }
    );
  }).catch(next);
});
```

Output from console.log(JSON.stringify(params,null,2)):

```
{
  "RequestItems":{
    "d_bike_manufacturers":{
      "Keys":[ { "name":"Fuji" }, { "name":"Specialized" } ]
    },
    "d_bike_models":{
      "Keys":[ { "model_name":"Roubaix3.0" },
               { "model_name":"StumpjumperM2" }
      ]
    }
  }
}
```

http://localhost:3000/manufacturers?names_str=Fuji,Specialized&bikes_str=Roubaix3.0,StumpjumperM2

```
DynamoDB BatchGet
{
  "Responses": {
    "d_bike_models": [
      {
        "model_name": "StumpjumperM2",
        "years": [
          1993,
          1994,
          1995,
          1996,
          1997,
          1998,
          2000
        ]
      },
      {
        "model_name": "Roubaix3.0",
        "years": [
          2010,
          2012
        ]
      }
    ],
    "d_bike_manufacturers": [
      {
        "name": "Specialized",
        "best_bike": "StumpjumperM2"
      },
      {
        "name": "Fuji",
        "best_bike": "Roubaix3.0"
      }
    ]
  },
  "UnprocessedKeys": {}
}
```

Summary

A little JSON can go a long way, especially when building an MVP or dealing with unstructured data. IMHO, NodeJS + DynamoDB is a very powerful pairing for handling this kind of data in your AWS environment.

Happy coding.