Your workplace will never be the same. Skills, workflows, and delivery methods are being permanently augmented.

The tools get better. Output accelerates. In many cases, quality dips slightly — but often not enough to matter.

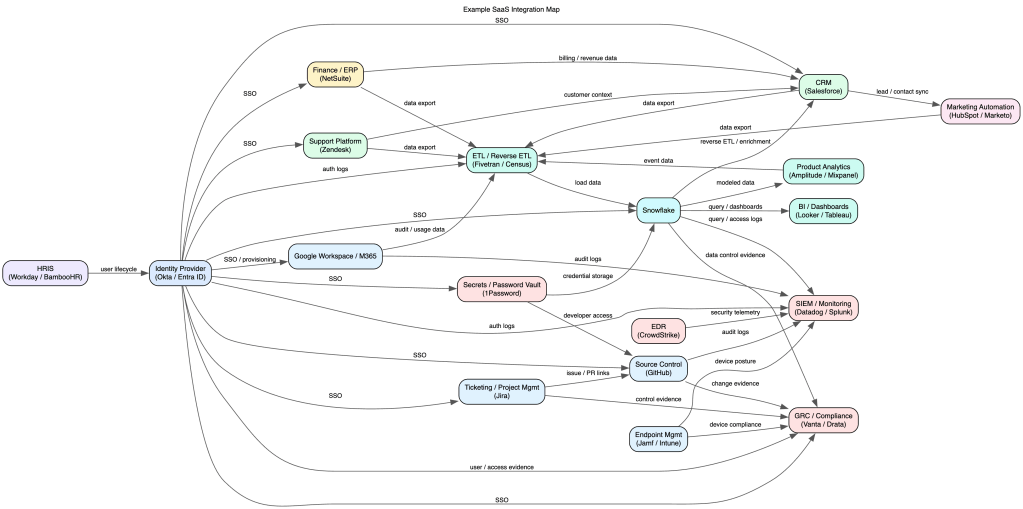

The collective graph of knowledge grows denser and more connected every day. I don’t think AGI, in the 1990s sci-fi sense, is ever arriving. But we are absolutely building something else: an epic hybrid neural system composed of humans, models, tools, and feedback loops.

I’m just one node in that system. Happy to contribute.

After spending significant time with frontier models and the emerging ecosystem around them, I’ve started noticing recurring patterns, behaviors, and phenomena that deserve names of their own. Here are a few phrases I’d propose for the new AI era.

(In no particular order.)

AI-guilt

The hesitancy to ask colleagues to review presentation decks, code, or pull requests, driven by the significant boost in one’s own productivity from using AI agents and tools.

LLM-zen

(pronounced ELM-zen)

Achieving a state of flow in a work setup that leverages multiple agents, models, and providers.

vibe-scent

The passive signal a technical person gives off about their AI tooling fluency — detectable in how they talk, work, or describe their stack, without them ever stating it directly.

hype-climb

The feeling of wonderment that, with all the AI developments and cool tech, things only get better or more useful from here on out.

token-chasm

The wide disparity within an organization, where a small group of individuals consumes orders of magnitude more tokens than the rest of the company’s personnel.

stop-slop

The collective desire to require (by regulation or industry pressure) a ‘SLOP’ button on every piece of posted social media content, so that people can feel better about helping to identify and avoid bad AI slop. It comes with the unfortunate knowledge that the button is itself a feedback mechanism, one that could help AI learn to make slop that is harder to identify.

cubicle-AI

AI tools or services trained and configured within a large corporation that vastly undercut the broader capabilities of the underlying LLM because of corporate governance and guardrails. Example: “That is a great prompt, and an answer requires detailed expertise and subject matter knowledge from within the firm.”

gen-check

The etiquette of checking and modifying generated content before distributing it to colleagues. It does not necessarily mean heavy QA on a deliverable or final product.

token-envy

The anxiety of not burning through as many tokens as your colleagues, even when raw consumption doesn’t actually translate to more or better output — keeping up with the Joneses, but for prompt spend.

stack-thrash

When doubt about your current toolkit and envy of what others might be running leads to constant tinkering, tool-hopping, and stack-tweaking — all motion, no velocity. Could be used in contexts outside of AI.